Master VLA Models & World Models for Robotics

An 8-lecture hands-on bootcamp where you'll learn to build, deploy, and experiment with Vision-Language-Action models, Diffusion Policy, and World Models on a real SO-101 robot arm. From first principles to real hardware.

Taught by MIT, Purdue & IIT Alumni

Models You'll Master

- Diffusion Policy: 46.9% improvement over prior methods

- World Models: Interactive Simulation

The Frontier of Robot Intelligence

These are the models and techniques you'll master in this bootcamp — from Physical Intelligence's Pi0 to Columbia's Diffusion Policy to interactive World Models.

Videos courtesy of Physical Intelligence, Columbia University, and Toyota Research Institute.

Why This Bootcamp?

The robotics industry is being reshaped by AI. Hardware companies are partnering with intelligence providers. Learning to build that intelligence layer is the key to the future.

Hardware Alone Isn't Enough

Building a robot is only half the battle. Without intelligent vision-language-action models, robots are just expensive arms that follow scripted motions. The future belongs to robots that can see, understand, and act.

The Intelligence Layer Is the Moat

Companies like Physical Intelligence, Moonlake AI, and Google DeepMind are racing to build the AI brains for robots. Hardware is becoming commoditized. The intelligence layer — VLA models, world models, diffusion policies — is where the real value lies.

Integration Is Hard — That's the Point

Many believe plugging AI into a robot is straightforward. It's not. Real-time inference on edge devices, handling multi-modal inputs, and robust policy deployment are each hard problems in their own right, and this bootcamp teaches you the skills to solve them.

What You'll Learn

Three cutting-edge areas of robot intelligence — from first principles to real hardware deployment.

Vision-Language-Action Models

Pi0 · SmolVLA · OpenVLA

Learn how VLA models take visual observations and language instructions to produce robot actions. You'll study three cutting-edge architectures — Pi0 from Physical Intelligence, SmolVLA (a compact open-source VLA), and OpenVLA — understanding their design, training, and deployment.

- How VLA models bridge perception and action

- Pi0: Physical Intelligence's flagship model

- SmolVLA: Efficient VLA for edge deployment

- OpenVLA: Open-source VLA for research & production

- Fine-tuning VLAs on custom robot tasks

Diffusion Policy

46.9% improvement over prior methods

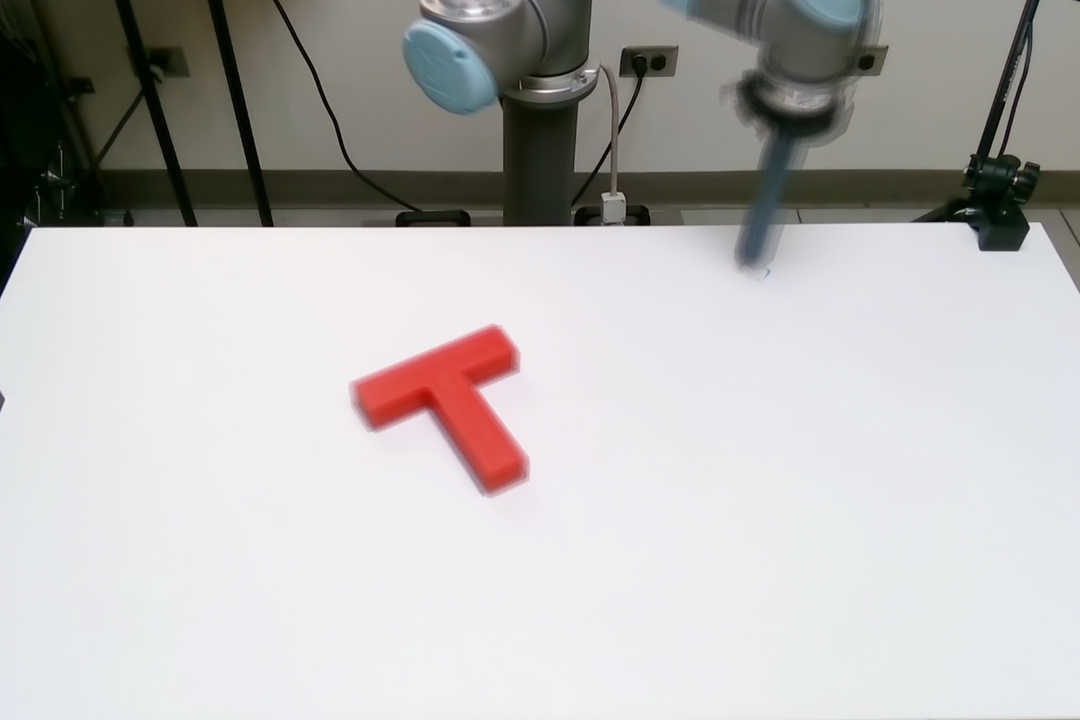

Master Diffusion Policy, a breakthrough approach that represents robot behavior as a conditional denoising diffusion process. Instead of predicting a single action, it learns a distribution over action sequences, so robots can handle multi-modal behaviors (tasks with several equally valid ways to act) and complex manipulation tasks.

- Denoising diffusion for action generation

- Handling multi-modal action distributions

- Receding horizon control for temporal reasoning

- Training on real-world demonstration data

- Deploying on manipulation tasks: pushing, grasping, pouring
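As a rough illustration of the first three ideas above, here is a minimal NumPy sketch of DDPM-style action sampling combined with receding horizon control. It is a toy under stated assumptions, not the Diffusion Policy implementation: the "robot" is a 2-D point, and `oracle_noise_predictor` is a hypothetical stand-in for a trained noise-prediction network that assumes the only demonstrated behavior is a straight line to the goal. The function names, the linear noise schedule, and all parameter values are illustrative choices.

```python
import numpy as np

def make_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linear DDPM noise schedule: betas, alphas, and cumulative alphas."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    alphas = 1.0 - betas
    return betas, alphas, np.cumprod(alphas)

def oracle_noise_predictor(noisy_actions, remaining, k, alpha_bars):
    """Hypothetical stand-in for a trained network eps_theta(a_k, obs, k).

    Assumes the clean action sequence is always `horizon` equal steps that
    together cover the remaining displacement to the goal.
    """
    horizon = noisy_actions.shape[0]
    clean = np.tile(remaining / horizon, (horizon, 1))
    return (noisy_actions - np.sqrt(alpha_bars[k]) * clean) / np.sqrt(1.0 - alpha_bars[k])

def sample_action_sequence(remaining, horizon, schedule, rng):
    """Reverse diffusion: start from Gaussian noise and denoise step by step."""
    betas, alphas, alpha_bars = schedule
    actions = rng.standard_normal((horizon, remaining.shape[0]))
    for k in reversed(range(len(betas))):
        eps = oracle_noise_predictor(actions, remaining, k, alpha_bars)
        actions = (actions - betas[k] / np.sqrt(1.0 - alpha_bars[k]) * eps) / np.sqrt(alphas[k])
        if k > 0:  # no fresh noise is added on the final denoising step
            actions = actions + np.sqrt(betas[k]) * rng.standard_normal(actions.shape)
    return actions

def receding_horizon_rollout(position, goal, replans=3, horizon=8, execute=4, seed=0):
    """Plan `horizon` actions, execute only the first `execute`, then replan."""
    rng = np.random.default_rng(seed)
    schedule = make_schedule(num_steps=50)
    position = np.asarray(position, dtype=float)
    goal = np.asarray(goal, dtype=float)
    for _ in range(replans):
        # The observation here is simply the remaining displacement to the goal.
        plan = sample_action_sequence(goal - position, horizon, schedule, rng)
        position = position + plan[:execute].sum(axis=0)
    return position

final = receding_horizon_rollout(position=[0.0, 0.0], goal=[1.0, 2.0])
print(final)
```

Because the oracle knows a single clean plan, every sample collapses to that plan, and each replan covers half of the remaining distance. A trained network would instead be fit to demonstration data and could return different valid plans from different noise draws, which is where the multi-modal behavior comes from.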

World Models

The Future of Robotics Simulation

Explore world models that simulate robot environments without physics engines — using AI to predict what happens next. Learn how companies like Moonlake AI are building interactive generative worlds for training, evaluation, and deployment of robotic systems.

- Action-conditioned video prediction models

- Interactive world simulation from pixel space

- Training policies entirely in simulation

- Sim-to-real transfer that actually works

- Moonlake AI's vision for generative worlds
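To make "predicting what happens next" concrete, here is a deliberately small sketch of an action-conditioned world model. It is only an analogy under loud assumptions: the world models covered in the bootcamp predict video frames from pixels, whereas this toy fits a linear model to a low-dimensional state (position and velocity of a 1-D point mass). All names (`true_step`, `fit_world_model`, `imagine`) are hypothetical and chosen for illustration.

```python
import numpy as np

def true_step(state, action, dt=0.1):
    """Ground-truth toy dynamics (1-D point mass); hidden from the learner."""
    pos, vel = state
    return np.array([pos + dt * vel, vel + dt * action[0]])

def collect_transitions(num, rng):
    """Gather (state, action, next_state) samples by interacting with the real system."""
    states = rng.uniform(-1.0, 1.0, size=(num, 2))
    actions = rng.uniform(-1.0, 1.0, size=(num, 1))
    next_states = np.array([true_step(s, a) for s, a in zip(states, actions)])
    return states, actions, next_states

def fit_world_model(states, actions, next_states):
    """Least-squares fit of next_state ≈ [state, action] @ weights."""
    inputs = np.hstack([states, actions])
    weights, *_ = np.linalg.lstsq(inputs, next_states, rcond=None)
    return weights

def imagine(weights, state, action_sequence):
    """Roll the learned model forward without touching the real system."""
    trajectory = [state]
    for action in action_sequence:
        state = np.hstack([state, action]) @ weights
        trajectory.append(state)
    return np.array(trajectory)

rng = np.random.default_rng(0)
weights = fit_world_model(*collect_transitions(200, rng))

# Compare an imagined rollout against the real system under the same actions.
plan = np.full((20, 1), 0.5)
start = np.array([0.0, 0.0])
imagined = imagine(weights, start, plan)

real = [start]
for action in plan:
    real.append(true_step(real[-1], action))
real = np.array(real)

print("max rollout error:", np.abs(imagined - real).max())
```

The same loop structure carries over when the state is an image and the model is a neural network: collect transitions, fit a predictor conditioned on the action, then evaluate candidate action sequences inside the learned model. The toy matches the real system closely only because the true dynamics are linear and noise-free; with pixel observations, rollout error typically grows with horizon.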

Real Hardware, Real Experiments

Deploy on a Real SO-101 Robot Arm

This isn't a simulation-only bootcamp. You'll work with a real 6-DOF SO-101 robot arm — collecting demonstrations, training VLA and diffusion policies, and watching your models control the arm in real-time.

SO-101 Robot Arm

Hands-on experiments with a real 6-DOF robot arm. Learn to collect demonstrations, train policies, and deploy AI models that make the arm perform real manipulation tasks.

Local Edge Inference

Run VLA model inference locally. Learn edge deployment techniques, model optimization, and real-time control — all running on your own hardware.

End-to-End Pipeline

Build the complete stack: data collection, model training, simulation testing, real robot deployment. Not just theory — you'll ship working robot intelligence.

Built for Everyone — From Scratch

Whether you're a student or a professional, this bootcamp starts from the fundamentals and takes you to deploying AI on real robots.

Engineering Students

You want to break into robotics & AI. This bootcamp teaches you the most in-demand skills — VLA models, diffusion policies, and real robot deployment — from scratch.

Software Engineers

You write code and want to enter the robotics AI space. Learn to build the intelligence layer that hardware companies are desperate for.

ML / AI Researchers

You work on ML/AI and want to apply your skills to embodied intelligence. Understand how VLAs, diffusion policies, and world models work under the hood.

Industry Professionals

You're in robotics or manufacturing and need to understand the AI revolution. Learn what's possible, what's practical, and how to implement it.

The Complete Roadmap

8 lectures, one every Saturday from 8 AM to 10 AM IST, progressing from foundations to deploying AI on real robots.

Lecture 1: The New Era of Robot Intelligence

- Why AI + Robotics changes everything

- The intelligence layer: from scripted motions to learned behaviors

- Hardware companies partnering with AI providers

- Overview of imitation learning and robot learning paradigms

- Setting up your development environment

Lecture 2: Vision-Language Foundations

- CLIP, SigLIP, and visual representation learning

- How vision encoders extract features for robot control

- Language grounding: connecting text instructions to visual observations

- Multi-modal embeddings for robotic tasks

- Hands-on: building a vision-language feature extractor

Lecture 3: Pi0 (Physical Intelligence's VLA Model)

- Deep dive into the Pi0 architecture

- How Pi0 fuses vision, language, and action

- Flow matching for continuous action generation

- Pre-training strategies and data requirements

- Hands-on: running Pi0 inference on manipulation tasks

Lecture 4: SmolVLA & OpenVLA (Open-Source VLAs)

- SmolVLA: compact VLA model design for edge deployment

- OpenVLA: open-source VLA for research and production

- Comparing VLA architectures: trade-offs and design choices

- Fine-tuning VLAs on custom tasks and environments

- Hands-on: fine-tune a VLA model on your own demonstration data

Lecture 5: Diffusion Policy for Robotic Manipulation

- Diffusion models crash course: from image generation to action generation

- Conditional denoising diffusion for robot control

- Multi-modal action distributions and receding horizon control

- Training Diffusion Policy on demonstration data

- Hands-on: train and deploy a Diffusion Policy for manipulation

Lecture 6: World Models (Simulating Robot Environments)

- Action-conditioned video prediction without physics engines

- Interactive world simulation from pixel space

- Training and evaluating policies in simulated worlds

- Sim-to-real transfer: making simulation results work on real robots

- Moonlake AI and the future of generative worlds for robotics

Lecture 7: SO-101 Arm Integration & Real Robot Experiments

- Setting up the SO-101 robot arm for AI control

- Data collection: recording human demonstrations

- Deploying VLA models and Diffusion Policy on the real arm

- Real-time inference pipeline on local hardware

- Hands-on: end-to-end deployment on real hardware

Lecture 8: Edge Deployment & Capstone Project

- Local inference optimization and edge deployment

- Model quantization and latency optimization for real-time control

- Building a complete robot intelligence pipeline

- Capstone: end-to-end project — train, simulate, deploy

- The future roadmap: where VLAs and world models are heading

From Our First Bootcamp

Our first Robot Learning Bootcamp covered Imitation Learning, VAEs, Transformers, and ACT Policy. Watch the Demo Day — real projects our students built and deployed on the SO-101 robot arm.

Everything Included

Not just lectures — a complete robot intelligence toolkit.

8 Live Lecture Recordings

Full HD recordings of all 8 lectures with chapter markers. Watch, rewatch, and learn at your own pace.

Complete Code Repository

All source code, notebooks, model configs, and deployment scripts. Yours to keep forever.

Hand-Written Notes & PDFs

Detailed lecture notes, architecture diagrams, and reference PDFs for every lecture.

Trained Robot Policies

Pre-trained VLA models, Diffusion Policies, and world models ready to deploy on the SO-101 arm.

Edge Deployment Pipeline

Complete local inference setup — from model optimization to real-time robot control.

Community Access

Join a community of robot learning enthusiasts. Get help, share projects, and collaborate.

Course Certificate

Official Vizuara AI Labs certificate upon course completion.

This is Version 2.0

We ran the first Modern Robot Learning Bootcamp, where students built and deployed AI models on real SO-101 robots. Version 2.0 goes deeper, covering VLA models, Diffusion Policy, and World Models.

See What V1 Students Built →

Learn from MIT, Purdue & IIT Alumni

Manning best-selling authors who build AI systems and publish research — not just talk about them.

Dr. Rajat Dandekar

Co-founder, Vizuara AI Labs

PhD from Purdue University, B.Tech & M.Tech from IIT Madras. Dr. Rajat brings deep expertise in reinforcement learning and reasoning models, focusing on advanced AI techniques for real-world robotics applications.

Dr. Raj Dandekar

Co-founder, Vizuara AI Labs

PhD from MIT, B.Tech from IIT Madras. Dr. Raj specializes in building AI systems from scratch — from LLMs to robot learning models. His expertise spans scientific machine learning, VLA architectures, and end-to-end robotics deployment.

Manning #1 Best-Seller

Build a DeepSeek Model (From Scratch)

By Dr. Rajat Dandekar, Dr. Raj Dandekar, Dr. Sreedath Panat & Naman Dwivedi

What Our Alumni Say

Hear from professionals who enrolled in our previous bootcamps.

“I had been the student of Raj for two courses, the Generative AI Fundamentals and also the Building LLM from Scratch. Personally, this journey was absolutely enlightening for me because of very unique pedagogy that Raj follows in his teaching style. First is his approach is absolutely no nonsense. He goes to the details of a working code and explains everybody on how actually the entire concept is working on grassroots. A great hands-on experience, lot of practical sessions, and above all, a lot of focus on understanding and building things from scratch.”

Samrat Kar

Software Engineering Manager, Boeing

“I recently participated in Vizuara's live courses and was thoroughly impressed. Unlike self-paced YouTube learning, the live interactive sessions provided immediate clarification of doubts, vibrant discussions with peers, and engaging interactions with industry professionals. The course content, delivered by Dr. Raj, was exceptionally detailed, covering technical intricacies and practical coding exercises. I highly recommend this course to anyone eager to advance their knowledge.”

Aman Kesarwani

Quantitative Researcher, Caxton Associates, NY. Ex-JPMorgan

“I really love the course. Especially the interaction between the instructor, Dr. Raj and the students. Explored the concepts in depth. Also the assignments helped me experiment and iterate and really understand how things work under the hood. If you are really serious about learning these concepts from scratch, this is the course for you. I highly recommend it.”

Kiran Bandhakavi

Product Manager, Navy Federal Credit Union

“This bootcamp was one of the most complete and intuition-building journeys I've taken. Huge credit to the instructors — rare combo of research-grade depth + crystal-clear teaching. The long-form, 'code-along like a real class' style made the ideas stick.”

Sri Aditya Deevi

Robotics + Computer Vision Researcher

“By far the longest and most rewarding bootcamp I have ever taken. One of the biggest highlights for me was going from just reading about concepts to actually implementing them from scratch. That hands-on process gave me insights I could never get from theory alone. By the end, I felt confident building my own models, which once felt far out of reach.”

Koti Reddy

Software Developer

“I thoroughly enjoyed this course and found it extremely valuable. The hands-on coding sessions that followed the theory were particularly helpful in strengthening my understanding. I wanted to differentiate myself by learning something more specialized, and this course exceeded my expectations. I would highly recommend it.”

Lalatendu Sahu

AI Professional

Invest in Robot Intelligence

Choose the plan that fits your learning goals.

Engineer

Everything you need to master VLA models and robot learning.

- Access to all 8 live lectures

- Complete lecture recordings

- Community forum access

- Complete code repository & notebooks

- Hand-written notes & PDFs

- Pre-trained model checkpoints

- Deployment scripts & configs

- Course certificate

Industry Professional

For professionals aiming to publish AI robotics research.

- Everything in Engineer

- 2 months of personalized research guidance

- Custom research problem statement

- Research project roadmap document

- Guidance toward a publishable manuscript

- Targeting leading robotics conferences

- 1-on-1 mentorship sessions

Enterprise

Bulk enrollment for teams and organizations.

- Everything in Industry Professional

- Bulk team enrollment

- Invoice-based payment

- Custom training modules

Enrolled in the Minor in Robotics?

Students who have enrolled in our Minor in Robotics program get this bootcamp completely free. The Minor in Robotics is a comprehensive program covering the full spectrum of modern robotics.

Learn About the Minor in Robotics →

Frequently Asked Questions

Everything you need to know about the bootcamp.

Ready to Build Intelligent Robots?

Join the next cohort starting March 21 and learn to build, train, and deploy VLA models on real robot hardware. 8 weeks. From scratch.